54 Data Visualization courses in Glasgow delivered Live Online

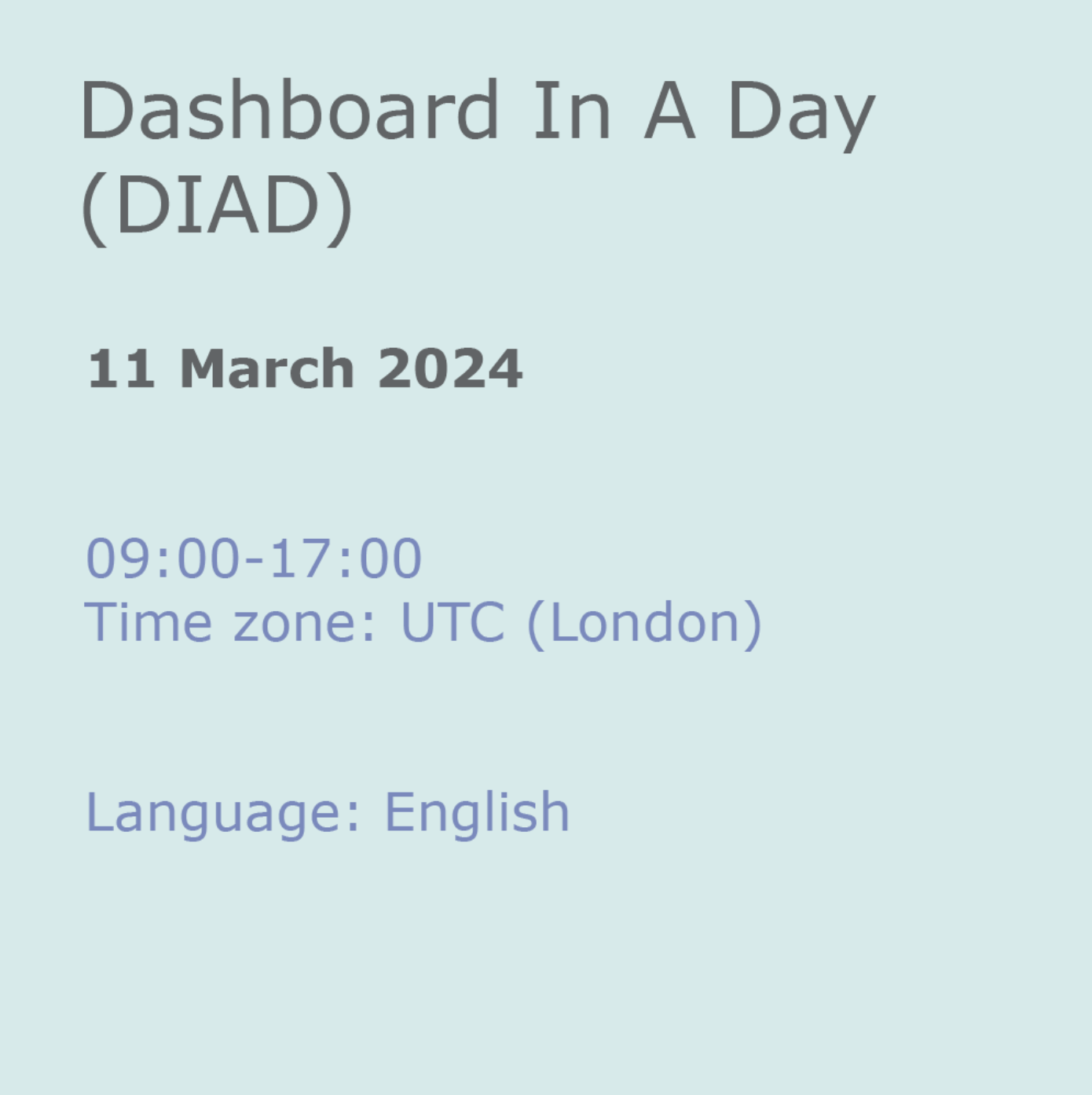

Dashboard In A Day (DIAD)

By Online Productivity Training

OVERVIEW

DIAD is a one-day, hands-on workshop for business analysts, covering the breadth of Power BI capabilities. The course focuses on five practical Labs, and by the end of the day attendees will better understand how to:

- Connect and transform data from a variety of data sources.
- Define business rules and KPIs.
- Explore data with powerful interactive visuals.
- Build stunning reports.
- Share their dashboards with their team and business partners, and publish them to the web.

The course content is managed by the Power BI engineering team at Microsoft. There is no exam associated with the course.

COURSE BENEFITS

- Learn how to clean, transform, and load data from various sources
- Create and manage a data model in Power BI consisting of multiple tables connected with relationships
- Build Measures and other calculations in the DAX language to plot in reports
- Manage and share report assets to the Power BI Service

WHO IS THE COURSE FOR?

- Data Analysts and Management Consultants with little or no experience of Power BI who wish to upgrade their knowledge to include Business Intelligence
- Analysts looking for a quick introduction to Power BI who don’t have the time for the full three-day PL-300 course
- Marketers in data-intensive organisations who need new tools to build visually appealing, dynamic charts for their stakeholders to use

LAB OUTLINE

Lab 1: Accessing & Preparing The Data

- Load data from Excel and CSV sources
- Manipulate the data to prepare it for reporting
- Prepare tables in Power Query and load them into the data model

Lab 2: Data Modelling And Exploration

- Create a range of different charts
- Highlight and cross-filter
- Create new groups and hierarchies
- Add new measures to the model

Lab 3: Data Visualization

- Add conditional formatting to a report
- Add logos to a filter
- Import a custom visual
- Apply a custom theme
- Add bookmarks to the report to tell a story

Lab 4: Publishing A Report And Creating A Dashboard

- Create a Workspace in the Power BI Service
- Publish a report to the Service
- Create a Dashboard and pin visuals to it
- Generate and view insights

Lab 5: Collaboration

- Share a Dashboard
- Access a Dashboard on a Mobile Device

Data storytelling

By Fire Plus Algebra

Data has become the most important resource for every organisation – but the insights gained from data analysis will only ever be truly valuable if they can be clearly expressed to other people.

This course is for anybody who works with data and needs to communicate the meaning in the numbers to colleagues, customers, bosses or external stakeholders. It will give you or your team the confidence and skills to translate raw data into compelling visual stories for your key audiences. The principles and skills covered apply to everything from the simplest PowerPoint chart to more complex interactive visualisations.

We’ll work with you before the course to ensure that we understand your organisation and what you’re hoping to achieve.

Sample learning content

Session 1: What makes a great data-driven story

- The key elements of a successful infographic or presentation.
- Industry best practice, and discussion of good (and bad) examples.
- A simple framework for identifying the Audience, Story and Action.

Session 2: Data in context

- How to balance function and aesthetic appeal.
- Identifying the right graph, chart, infographic or other visual.
- Framing the data and providing contextual information.

Session 3: Designing for the human brain

- Using colours to add emphasis and meaning.
- Design and layout principles, and creating hierarchies of information.
- The principle of ‘self-sufficiency’, and removing clutter.

Session 4: Navigation and narrative

- Tailoring visualisations for different types of communications.
- Structuring presentations and longer reports.
- Thinking in layers to create interactive dashboards.

Delivery

We deliver our courses over Zoom, to maximise flexibility. The training can be delivered in a single day, or across multiple sessions. All of our courses are live and interactive – every session includes a mix of formal tuition and hands-on exercises. To ensure this is possible, the number of attendees is capped at 16 people.

Tutor

Alan Rutter is the founder of Fire Plus Algebra. He is a specialist in communicating complex subjects through data visualisation, writing and design. He teaches for General Assembly and runs in-house training for public sector clients including the Home Office, the Department of Transport, the Biotechnology and Biological Sciences Research Council, the Health Foundation, and numerous local government and emergency services teams. He previously worked with Guardian Masterclasses on curating and delivering new course strands, including developing and teaching their B2B data visualisation courses. He oversaw the iPad edition launches of Wired, GQ, Vanity Fair and Vogue in the UK, and has worked with Condé Nast International as product owner on a bespoke digital asset management system for their 11 global markets.

Testimonial

“I was familiar with Alan’s work as a Guardian Masterclass instructor on data visualisation and digital journalism, which made it easy for me to recommend him for onsite training at the Liverpool School of Tropical Medicine. We had a large group of people interested in honing their abilities to depict their research and stories in engaging ways. Alan’s course provided great insight about common communication pitfalls and how to avoid them, how to become better communicators by understanding the audience diversity, and it showcased some great online tools for creating infographics. This should be mandatory training for all students, academics, report writers and those involved with conveying research to the media as it will help increase the clarity and accessibility of our own research stories.”

Dr Lee Haines | Liverpool School of Tropical Medicine

M20778 Analyzing Data with Power BI

By Nexus Human

Duration: 3 Days, 18 CPD hours

This course is intended for

The course will likely be attended by SQL Server report creators who are interested in alternative methods of presenting data.

Overview

After completing this course, students will be able to:

- Perform Power BI desktop data transformation.
- Describe Power BI desktop modelling.
- Create a Power BI desktop visualization.
- Implement the Power BI service.
- Describe how to connect to Excel data.
- Describe how to collaborate with Power BI data.
- Connect directly to data stores.
- Describe the Power BI developer API.
- Describe the Power BI mobile app.

The main purpose of the course is to give students a good understanding of data analysis with Power BI. The course includes creating visualizations, the Power BI Service, and the Power BI Mobile App.

Introduction to Self-Service BI Solutions

- Introduction to business intelligence
- Introduction to data analysis
- Introduction to data visualization
- Overview of self-service BI
- Considerations for self-service BI
- Microsoft tools for self-service BI
- Lab: Exploring an Enterprise BI solution

Introducing Power BI

- Power BI
- The Power BI service
- Lab: Creating a Power BI dashboard

Power BI

- Using Excel as a data source for Power BI
- The Power BI data model
- Using databases as a data source for Power BI
- The Power BI service
- Lab: Importing data into Power BI

Shaping and Combining Data

- Power BI desktop queries
- Shaping data
- Combining data
- Lab: Shaping and combining data

Modelling data

- Relationships
- DAX queries
- Calculations and measures
- Lab: Modelling Data

Interactive Data Visualizations

- Creating Power BI reports
- Managing a Power BI solution
- Lab: Creating a Power BI report

Direct Connectivity

- Cloud data
- Connecting to analysis services
- Lab: Direct Connectivity

Developer API

- The developer API
- Custom visuals
- Lab: Using the developer API

Power BI mobile app

- The Power BI mobile app
- Using the Power BI mobile app
- Power BI embedded

Advanced C Plus Plus

By Nexus Human

Duration: 3 Days, 18 CPD hours

This course is intended for

If you have worked in C++ but want to learn how to make the most of this language, especially for large projects, this course is for you.

Overview

By the end of this course, you'll have developed programming skills that will set you apart from other C++ programmers. After completing this course, you will be able to:

- Delve into the anatomy and workflow of C++
- Study the pros and cons of different approaches to coding in C++
- Test, run, and debug your programs
- Link object files as a dynamic library
- Use templates, SFINAE, constexpr if expressions and variadic templates
- Apply best practice to resource management

This course begins with advanced C++ concepts by helping you decipher the sophisticated C++ type system and understand how various stages of compilation convert source code to object code. You'll then learn how to recognize the tools that need to be used in order to control the flow of execution, capture data, and pass data around. By creating small models, you'll even discover how to use advanced lambdas and captures and express common API design patterns in C++. As you cover later lessons, you'll explore ways to optimize your code by learning about memory alignment, cache access, and the time a program takes to run. The concluding lesson will help you to maximize performance by understanding modern CPU branch prediction and how to make your code cache-friendly.

Anatomy of Portable C++ Software

- Managing C++ Projects
- Writing Readable Code

No Ducks Allowed: Types and Deduction

- C++ Types
- Creating User Types
- Structuring our Code

No Ducks Allowed: Templates and Deduction

- Inheritance, Polymorphism, and Interfaces
- Templates: Generic Programming
- Type Aliases: typedef and using
- Class Templates

No Leaks Allowed: Exceptions and Resources

- Exceptions in C++
- RAII and the STL
- Move Semantics
- Name Lookup
- Caveat Emptor

Separation of Concerns: Software Architecture, Functions, and Variadic Templates

- Function Objects and Lambda Expressions
- Variadic Templates

The Philosophers' Dinner: Threads and Concurrency

- Synchronous, Asynchronous, and Threaded Execution
- Review Synchronization, Data Hazards, and Race Conditions
- Future, Promises, and Async

Streams and I/O

- File I/O Implementation Classes
- String I/O Implementation
- I/O Manipulators
- Making Additional Streams
- Using Macros

Everybody Falls, It's How You Get Back Up: Testing and Debugging

- Assertions
- Unit Testing and Mock Testing
- Understanding Exception Handling
- Breakpoints, Watchpoints, and Data Visualization

Need for Speed: Performance and Optimization

- Performance Measurement
- Runtime Profiling
- Optimization Strategies
- Cache Friendly Code

AWS Building Data Lakes on AWS

By Nexus Human

Duration: 1 Day, 6 CPD hours

This course is intended for

- Data platform engineers
- Solutions architects
- IT professionals

Overview

In this course, you will learn to:

- Apply data lake methodologies in planning and designing a data lake
- Articulate the components and services required for building an AWS data lake
- Secure a data lake with appropriate permissions
- Ingest, store, and transform data in a data lake
- Query, analyze, and visualize data within a data lake

In this course, you will learn how to build an operational data lake that supports analysis of both structured and unstructured data. You will learn the components and functionality of the services involved in creating a data lake. You will use AWS Lake Formation to build a data lake, AWS Glue to build a data catalog, and Amazon Athena to analyze data. The course lectures and labs further your learning with the exploration of several common data lake architectures.

Introduction to data lakes

- Describe the value of data lakes
- Compare data lakes and data warehouses
- Describe the components of a data lake
- Recognize common architectures built on data lakes

Data ingestion, cataloging, and preparation

- Describe the relationship between data lake storage and data ingestion
- Describe AWS Glue crawlers and how they are used to create a data catalog
- Identify data formatting, partitioning, and compression for efficient storage and query
- Lab 1: Set up a simple data lake

Data processing and analytics

- Recognize how data processing applies to a data lake
- Use AWS Glue to process data within a data lake
- Describe how to use Amazon Athena to analyze data in a data lake

Building a data lake with AWS Lake Formation

- Describe the features and benefits of AWS Lake Formation
- Use AWS Lake Formation to create a data lake
- Understand the AWS Lake Formation security model
- Lab 2: Build a data lake using AWS Lake Formation

Additional Lake Formation configurations

- Automate AWS Lake Formation using blueprints and workflows
- Apply security and access controls to AWS Lake Formation
- Match records with AWS Lake Formation FindMatches
- Visualize data with Amazon QuickSight
- Lab 3: Automate data lake creation using AWS Lake Formation blueprints
- Lab 4: Data visualization using Amazon QuickSight

Architecture and course review

- Post course knowledge check
- Architecture review
- Course review

Overview

Data and visual analytics are emerging fields concerned with analysing, modelling, and visualising complex high-dimensional data. Such data can be analysed and visualised with many languages, including Python and R. This course will help participants build the skills and in-depth knowledge needed for improved modelling, analysis and visualisation techniques. The course will highlight practical challenges, including composite real-world data, and will also comprise several practical studies.

R Programming for Data Science (v1.0)

By Nexus Human

Duration: 5 Days, 30 CPD hours

This course is intended for

This course is designed for students who want to learn the R programming language, particularly students who want to leverage R for data analysis and data science tasks in their organization. The course is also designed for students with an interest in applying statistics to real-world problems. A typical student in this course should have several years of experience with computing technology, along with a proficiency in at least one other programming language.

Overview

In this course, you will use R to perform common data science tasks. You will:

- Set up an R development environment and execute simple code.
- Perform operations on atomic data types in R, including characters, numbers, and logicals.
- Perform operations on data structures in R, including vectors, lists, and data frames.
- Write conditional statements and loops.
- Structure code for reuse with functions and packages.
- Manage data by loading and saving datasets, manipulating data frames, and more.
- Analyze data through exploratory analysis, statistical analysis, and more.
- Create and format data visualizations using base R and ggplot2.
- Create simple statistical models from data.

In our data-driven world, organizations need the right tools to extract valuable insights from that data. The R programming language is one of the tools at the forefront of data science. Its robust set of packages and statistical functions makes it a powerful choice for analyzing data, manipulating data, performing statistical tests on data, and creating predictive models from data. Likewise, R is notable for its strong data visualization tools, enabling you to create high-quality graphs and plots that are incredibly customizable. This course will teach you the fundamentals of programming in R to get you started. It will also teach you how to use R to perform common data science tasks and achieve data-driven results for the business.

Lesson 1: Setting Up R and Executing Simple Code

- Topic A: Set Up the R Development Environment
- Topic B: Write R Statements

Lesson 2: Processing Atomic Data Types

- Topic A: Process Characters
- Topic B: Process Numbers
- Topic C: Process Logicals

Lesson 3: Processing Data Structures

- Topic A: Process Vectors
- Topic B: Process Factors
- Topic C: Process Data Frames
- Topic D: Subset Data Structures

Lesson 4: Writing Conditional Statements and Loops

- Topic A: Write Conditional Statements
- Topic B: Write Loops

Lesson 5: Structuring Code for Reuse

- Topic A: Define and Call Functions
- Topic B: Apply Loop Functions
- Topic C: Manage R Packages

Lesson 6: Managing Data in R

- Topic A: Load Data
- Topic B: Save Data
- Topic C: Manipulate Data Frames Using Base R
- Topic D: Manipulate Data Frames Using dplyr
- Topic E: Handle Dates and Times

Lesson 7: Analyzing Data in R

- Topic A: Examine Data
- Topic B: Explore the Underlying Distribution of Data
- Topic C: Identify Missing Values

Lesson 8: Visualizing Data in R

- Topic A: Plot Data Using Base R Functions
- Topic B: Plot Data Using ggplot2
- Topic C: Format Plots in ggplot2
- Topic D: Create Combination Plots

Lesson 9: Modeling Data in R

- Topic A: Create Statistical Models in R
- Topic B: Create Machine Learning Models in R

Building Data Lakes on AWS

By Nexus Human

Duration: 1 Day, 6 CPD hours

This course is intended for

- Data platform engineers
- Solutions architects
- IT professionals

Overview

In this course, you will learn to:

- Apply data lake methodologies in planning and designing a data lake
- Articulate the components and services required for building an AWS data lake
- Secure a data lake with appropriate permissions
- Ingest, store, and transform data in a data lake
- Query, analyze, and visualize data within a data lake

In this course, you will learn how to build an operational data lake that supports analysis of both structured and unstructured data. You will learn the components and functionality of the services involved in creating a data lake. You will use AWS Lake Formation to build a data lake, AWS Glue to build a data catalog, and Amazon Athena to analyze data. The course lectures and labs further your learning with the exploration of several common data lake architectures.

Module 1: Introduction to data lakes

- Describe the value of data lakes
- Compare data lakes and data warehouses
- Describe the components of a data lake
- Recognize common architectures built on data lakes

Module 2: Data ingestion, cataloging, and preparation

- Describe the relationship between data lake storage and data ingestion
- Describe AWS Glue crawlers and how they are used to create a data catalog
- Identify data formatting, partitioning, and compression for efficient storage and query
- Lab 1: Set up a simple data lake

Module 3: Data processing and analytics

- Recognize how data processing applies to a data lake
- Use AWS Glue to process data within a data lake
- Describe how to use Amazon Athena to analyze data in a data lake

Module 4: Building a data lake with AWS Lake Formation

- Describe the features and benefits of AWS Lake Formation
- Use AWS Lake Formation to create a data lake
- Understand the AWS Lake Formation security model
- Lab 2: Build a data lake using AWS Lake Formation

Module 5: Additional Lake Formation configurations

- Automate AWS Lake Formation using blueprints and workflows
- Apply security and access controls to AWS Lake Formation
- Match records with AWS Lake Formation FindMatches
- Visualize data with Amazon QuickSight
- Lab 3: Automate data lake creation using AWS Lake Formation blueprints
- Lab 4: Data visualization using Amazon QuickSight

Module 6: Architecture and course review

- Post course knowledge check
- Architecture review
- Course review

Additional course details

Nexus Humans Building Data Lakes on AWS training program is a workshop that presents a mix of sessions, lessons, and masterclasses crafted to move your learning forward. This immersive bootcamp-style experience includes interactive lectures, hands-on labs, and collaborative hackathons, all designed to reinforce fundamental concepts. Guided by seasoned coaches, each session offers insights and practical skills for honing your expertise. Whether you're new to professional skills or a seasoned professional, this comprehensive course aims to equip you with the knowledge necessary for success.

While we feel this is the best course on Building Data Lakes on AWS, and one of our Top 10, we encourage you to read the course outline to make sure it is the right content for you.

Additionally, private sessions, closed classes or dedicated events are available both live online and at our training centres in Dublin and London, as well as at your offices anywhere in the UK, Ireland or across EMEA.

Dashboard design

By Fire Plus Algebra

Data dashboards provide key information to stakeholders so that they can make informed decisions. While there are plenty of software solutions for building these essential data products, there is much less guidance on how to design dashboards to meet the diverse needs of users.

This course is for anyone who is building or implementing dashboards and wants to know more about design principles and best practice. You could be using business intelligence software (such as Power BI or Tableau), or implementing bespoke solutions. The course will give your team the ability to evaluate user needs and levels of understanding, make informed decisions about chart selections, and make effective use of interactivity and dynamic data.

We’ll work with you before the course to ensure that we understand your organisation and what you’re hoping to achieve.

Sample learning content

Session 1: Data with a purpose

- Understanding the different types of dashboard.
- Information overload and other common dashboard pitfalls.
- Assessing user needs and levels of data fluency.

Session 2: Planning a dashboard

- Assessing diverse user needs and levels of data fluency.
- Taking a User Experience (UX) approach to design and navigation.
- Applying an iterative and collaborative approach to onboarding.

Session 3: Graphs, charts and dials

- Understanding how graphical perception informs chart choices.
- Making intelligent design choices to help users explore.
- Design principles for layout and navigation.

Session 4: Using interactivity

- Making effective use of filters to slice and dice data sets.
- Using layers of information to enable drilldown data exploration.
- Complementing dashboards with automated alerts and queries.

Delivery

We deliver our courses over Zoom, to maximise flexibility. The training can be delivered in a single day, or across multiple sessions. All of our courses are live and interactive – every session includes a mix of formal tuition and hands-on exercises. To ensure this is possible, the number of attendees is capped at 16 people.

Tutor

Alan Rutter is the founder of Fire Plus Algebra. He is a specialist in communicating complex subjects through data visualisation, writing and design. He teaches for General Assembly and runs in-house training for public sector clients including the Home Office, the Department of Transport, the Biotechnology and Biological Sciences Research Council, the Health Foundation, and numerous local government and emergency services teams. He previously worked with Guardian Masterclasses on curating and delivering new course strands, including developing and teaching their B2B data visualisation courses. He oversaw the iPad edition launches of Wired, GQ, Vanity Fair and Vogue in the UK, and has worked with Condé Nast International as product owner on a bespoke digital asset management system for their 11 global markets.

Testimonial

“Alan was great to work with, he took us through the concepts behind data visualisation which means our team is now equipped for the future. He has a wide range of experience across the topic that is delivered in a clear, concise and friendly manner. We look forward to working with Alan again in the future.”

John Masterson | Chief Product Officer | ImproveWell

Tableau Desktop - Part 1

By Nexus Human

Duration: 2 Days, 12 CPD hours

Overview

- Identify and configure basic functions of Tableau.
- Connect to data sources, import data into Tableau, and save Tableau files.
- Create views and customize data in visualizations.
- Manage, sort, and group data.
- Save and share data sources and workbooks.
- Filter data in views.
- Customize visualizations with annotations, highlights, and advanced features.
- Create and enhance dashboards in Tableau.
- Create and enhance stories in Tableau.

As technology progresses and becomes more interwoven with our businesses and lives, more and more data is collected about business and personal activities. This era of "big data" has exploded due to the rise of cloud computing, which provides an abundance of computational power and storage, allowing organizations of all sorts to capture and store data. Leveraging that data effectively can provide timely insights and competitive advantage. The creation of data-backed visualizations is a key way data scientists, or any professional, can explore, analyze, and report insights and trends from data. Tableau software is designed for this purpose. Tableau was built to connect to a wide range of data sources and allows users to quickly create visualizations of connected data to gain insights, show trends, and create reports. Tableau's data connection capabilities and visualization features go far beyond those that can be found in spreadsheets, allowing users to create compelling and interactive worksheets, dashboards, and stories that bring data to life and turn data into thoughtful action.

Prerequisites

To ensure your success in this course, you should have experience managing data with Microsoft Excel or Google Sheets.

Lesson 1: Tableau Fundamentals

- Topic A: Overview of Tableau
- Topic B: Navigate and Configure Tableau

Lesson 2: Connecting to and Preparing Data

- Topic A: Connect to Data
- Topic B: Build a Data Model
- Topic C: Save Workbook Files
- Topic D: Prepare Data for Analysis

Lesson 3: Exploring Data

- Topic A: Create Views
- Topic B: Customize Data in Visualizations

Lesson 4: Managing, Sorting, and Grouping Data

- Topic A: Adjust Fields
- Topic B: Sort Data
- Topic C: Group Data

Lesson 5: Saving, Publishing, and Sharing Data

- Topic A: Save Data Sources
- Topic B: Publish Data Sources and Visualizations
- Topic C: Share Workbooks for Collaboration

Lesson 6: Filtering Data

- Topic A: Configure Worksheet Filters
- Topic B: Apply Advanced Filter Options
- Topic C: Create Interactive Filters

Lesson 7: Customizing Visualizations

- Topic A: Format and Annotate Views
- Topic B: Emphasize Data in Visualizations
- Topic C: Create Animated Workbooks
- Topic D: Best Practices for Visual Design

Lesson 8: Creating Dashboards in Tableau

- Topic A: Create Dashboards
- Topic B: Enhance Dashboards with Actions
- Topic C: Create Mobile Dashboards

Lesson 9: Creating Stories in Tableau

- Topic A: Create Stories
- Topic B: Enhance Stories with Tooltips