
Kubernetes Administration

By Nexus Human

Duration: 4 days (24 CPD hours)

Overview

Topics include:

- Installing a multi-node Kubernetes cluster using kubeadm, and growing a cluster
- Choosing and implementing cluster networking
- Managing the application lifecycle in various ways, including scaling, updates and rollbacks
- Configuring security for both the cluster and its containers
- Managing storage available to containers
- Monitoring, logging and troubleshooting containers and the cluster
- Configuring scheduling and affinity of container deployments
- Using Helm and charts to automate application deployment
- Understanding Federation for fault tolerance and higher availability

In this vendor-agnostic course, you'll learn the installation, configuration and administration of a production-grade Kubernetes cluster.

Course outline

- Introduction: Linux Foundation; Linux Foundation Training; Linux Foundation Certifications; Laboratory Exercises, Solutions and Resources; Distribution Details; Labs
- Basics of Kubernetes: Define Kubernetes; Cluster Structure; Adoption; Project Governance and CNCF; Labs
- Installation and Configuration: Getting Started With Kubernetes; Minikube; kubeadm; More Installation Tools; Labs
- Kubernetes Architecture: Kubernetes Architecture; Networking; Other Cluster Systems; Labs
- APIs and Access: API Access; Annotations; Working with a Simple Pod; kubectl and API; Swagger and OpenAPI; Labs
- API Objects: API Objects; The v1 Group; API Resources; RBAC APIs; Labs
- Managing State With Deployments: Deployment Overview; Managing Deployment States; Deployments and ReplicaSets; DaemonSets; Labels; Labs
- Services: Overview; Accessing Services; DNS; Labs
- Volumes and Data: Volumes Overview; Volumes; Persistent Volumes; Passing Data to Pods; ConfigMaps; Labs
- Ingress: Overview; Ingress Controller; Ingress Rules; Labs
- Scheduling: Overview; Scheduler Settings; Policies; Affinity Rules; Taints and Tolerations; Labs
- Logging and Troubleshooting: Overview; Troubleshooting Flow; Basic Start Sequence; Monitoring; Logging; Troubleshooting Resources; Labs
- Custom Resource Definition: Overview; Custom Resource Definitions; Aggregated APIs; Labs
- Kubernetes Federation: Overview; Federated Resources; Labs
- Helm: Overview; Helm; Using Helm; Labs
- Security: Overview; Accessing the API; Authentication and Authorization; Admission Controller; Pod Policies; Network Policies; Labs

ISTQB Software Testing Certification Training - Foundation Level (CTFL)

By Nexus Human

Duration: 3 days (18 CPD hours)

Who this course is for: software testers (both technical and user acceptance testers), test analysts, test engineers, test consultants, software developers, and managers, including test managers, project managers and quality managers.

Overview

By the end of this course, an attendee should be able to:

- perform effective testing of software;
- be aware of testing techniques and standards;
- understand what testing tools can achieve;
- know where to find more information about testing;
- establish the basic steps of the testing process.

This is an ISTQB certification in software testing for the US. In this course you will study all the basic aspects of software testing and QA. It includes a comprehensive overview of the tasks, methods and techniques for testing software effectively. The course prepares you for the ISTQB Foundation Level exam, and passing the exam earns you the ISTQB CTFL certification.

Course outline

- Fundamentals of Testing: What is Testing? (Typical Objectives of Testing; Testing and Debugging); Why is Testing Necessary? (Testing's Contributions to Success; Quality Assurance and Testing; Errors, Defects, and Failures; Defects, Root Causes and Effects); Seven Testing Principles; Test Process (Test Process in Context; Test Activities and Tasks; Test Work Products; Traceability between the Test Basis and Test Work Products); The Psychology of Testing (Human Psychology and Testing; Tester's and Developer's Mindsets)
- Testing Throughout the Software Development Lifecycle: Software Development Lifecycle Models (Software Development and Software Testing; Software Development Lifecycle Models in Context); Test Levels (Component Testing; Integration Testing; System Testing; Acceptance Testing); Test Types (Functional Testing; Non-functional Testing; White-box Testing; Change-related Testing; Test Types and Test Levels); Maintenance Testing (Triggers for Maintenance; Impact Analysis for Maintenance)
- Static Testing: Static Testing Basics (Work Products that Can Be Examined by Static Testing; Benefits of Static Testing; Differences between Static and Dynamic Testing); Review Process (Work Product Review Process; Roles and Responsibilities in a Formal Review; Review Types; Applying Review Techniques; Success Factors for Reviews)
- Test Techniques: Categories of Test Techniques (Choosing Test Techniques; Categories of Test Techniques and Their Characteristics); Black-box Test Techniques (Equivalence Partitioning; Boundary Value Analysis; Decision Table Testing; State Transition Testing; Use Case Testing); White-box Test Techniques (Statement Testing and Coverage; Decision Testing and Coverage; The Value of Statement and Decision Testing); Experience-based Test Techniques (Error Guessing; Exploratory Testing; Checklist-based Testing)
- Test Management: Test Organization (Independent Testing; Tasks of a Test Manager and Tester); Test Planning and Estimation (Purpose and Content of a Test Plan; Test Strategy and Test Approach; Entry Criteria and Exit Criteria, i.e. Definition of Ready and Definition of Done; Test Execution Schedule; Factors Influencing the Test Effort; Test Estimation Techniques); Test Monitoring and Control (Metrics Used in Testing; Purposes, Contents, and Audiences for Test Reports); Configuration Management; Risks and Testing (Definition of Risk; Product and Project Risks; Risk-based Testing and Product Quality); Defect Management
- Tool Support for Testing: Test Tool Considerations (Test Tool Classification; Benefits and Risks of Test Automation; Special Considerations for Test Execution and Test Management Tools); Effective Use of Tools (Main Principles for Tool Selection; Pilot Projects for Introducing a Tool into an Organization; Success Factors for Tools)

Additional course details: Nexus Human's ISTQB Software Testing Certification Training - Foundation Level (CTFL) training program is a workshop that presents an invigorating mix of sessions, lessons and masterclasses crafted to propel your learning forward. This immersive bootcamp-style experience features interactive lectures, hands-on labs and collaborative hackathons, all designed to strengthen fundamental concepts. Each session is guided by seasoned coaches and offers valuable insights and practical skills for honing your expertise. Whether you are new to professional skills training or a seasoned professional, this comprehensive course aims to equip you with the knowledge and skills necessary for success. We feel this is the best ISTQB Software Testing Certification Training - Foundation Level (CTFL) course and one of our Top 10. Even so, we encourage you to read the course outline to make sure it is the right content for you. Private sessions, closed classes and dedicated events are also available. These can run live online, at our training centres in Dublin and London, or at your offices anywhere in the UK, Ireland or across EMEA.

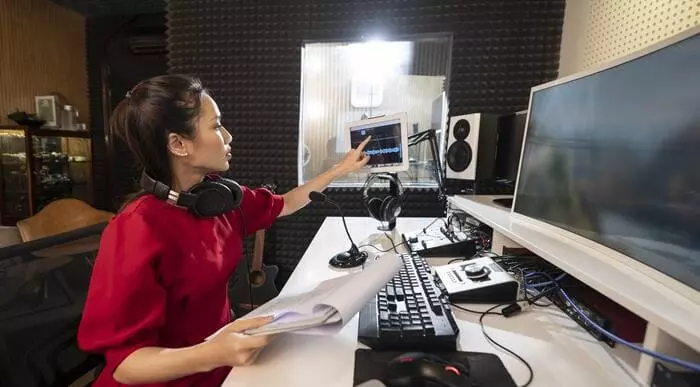

Adobe Audition Training Course

By Study Plex

Highlights of the Course

- Course Type: Online learning
- Duration: 1 to 2 hours
- Tutor Support: Tutor support is included
- Customer Support: 24/7 customer support is available
- Quality Training: The course is designed by an industry expert
- Recognised Credential: Recognised and valuable certification
- Completion Certificate: Free course completion certificate included
- Instalment: 3-instalment payment plan available at checkout

What will you learn from this course?

- Gain comprehensive knowledge of audio editing
- Understand the core competencies and principles of audio editing
- Explore the various areas of audio editing
- Apply the skills you acquire from this course in a real-life context
- Become a confident, expert audio editor

Adobe Audition Training Course

Master the skills you need to propel your career forward in audio editing. This course will equip you with the essential knowledge and skills to become a confident audio editor and take your career to the next level. The skills and knowledge you gain through this comprehensive Adobe Audition training course will bring you one step closer to your professional goals. They will also help you build a rewarding career.

This course teaches the theory of effective audio editing practice. It equips you with the essential skills, confidence and competence to work in the audio editing industry. You'll gain a solid understanding of the core competencies required for a successful career in audio editing. The course is designed by industry experts, so it is based on current expertise and best practices. It is intended for audio editors and for people who aspire to specialise in audio editing. Enrol in this Adobe Audition training course today and take the next step towards your personal and professional goals. Earn industry-recognised credentials that demonstrate your new skills, add value to your CV and help you stand out from other candidates.

Who is this Course for?

This Adobe Audition training course is ideal for anyone who wants to boost their career profile or advance their career in this field through a thorough understanding of the subject. Anyone who wants to gain extensive knowledge of audio editing can also take this course. Whether you are a complete beginner or an aspiring professional, this course will give you the necessary skills and professional competence. It can open the door to a wide range of professions within your chosen sector.

Entry Requirements

This Adobe Audition training course has no academic prerequisites and is open to students from all academic disciplines. You will, however, need a laptop, desktop, tablet or smartphone, as well as a reliable internet connection.

Assessment

This Adobe Audition training course assesses learners through multiple-choice questions (MCQs). After completing the modules, learners must answer MCQs to complete the assessment procedure. The MCQs measure how much a learner has grasped from each section. The pass mark is 60%.

Advance Your Career

This Adobe Audition training course will give you a fresh opportunity to enter the relevant job market and choose your desired career path. You will also be able to advance your career, become more competitive in your chosen field and highlight these skills on your resume.

Recognised Accreditation

This course is accredited by continuing professional development (CPD). CPD UK is globally recognised by employers, professional organisations and academic institutions. A certificate from CPD Certification Service therefore adds value to your professional goals and achievements.

Course Curriculum

Adobe Audition For People In A Hurry
- Welcome to the Complete Adobe Audition CC Course (00:01:00)
- Are You Ready to Learn the Essentials of Adobe Audition in Less Than 30 Minutes? (00:01:00)
- How to Record Audio, Apply Effects, Save Files, and Export MP3 (00:04:00)
- Secrets to Reducing Time Editing Audio by Recording with a Quality Microphone in a Quiet Studio (00:04:00)
- Narration Workflow for Quickly Redoing Mistakes by Leaving Silence (00:05:00)
- Multitrack Sessions for Working with Multiple Audio Files and Advanced Mixing (00:04:00)
- How to Make Audio Recorded on Your Phone Sound Better in 5 Minutes (00:05:00)
- You Are On Your Way to Mastering Adobe Audition (00:01:00)

Adobe Audition Interface for Beginners
- Audio Not Recording or Playing Back in Adobe Audition? Check Input and Output Devices (00:02:00)
- Starting New Audio Files, Multitrack Sessions, and Saving Projects (00:08:00)
- 1 Beginner Audio Mistake and Solution (00:08:00)
- Saving and Exporting Audio Files in Adobe Audition (00:06:00)

How to Record Audio in Adobe Audition for Easy Editing by Leaving Silence After Mistakes
- How to Save Hundreds of Hours Editing (00:08:00)
- Editing a Voice Recording in Adobe Audition Using Silence to Find and Delete Errors (00:13:00)
- Punch and Roll Recording in Adobe Audition for Quickly Fixing Narration Mistakes (00:09:00)

Multitrack Session Basics with Podcast Template on Adobe Audition
- Fade Audio In and Out (00:06:00)
- Copying, Cutting, Splitting, Pasting, and Editing Audio Together in Adobe Audition (00:12:00)
- Starting a Music Production in Adobe Audition (00:02:00)

Noise Reduction with Adobe Audition: Capture Noise Print and Removing a Background Air Conditioner
- Best Effects Presets for Beautiful Vocals (00:11:00)
- Applying the Effects Rack to Add Compression, Limiting, and Equalization in Adobe Audition (00:14:00)
- Match Loudness on Multiple Files in Adobe Audition with Batch Processing (00:12:00)
- Time Stretching (00:04:00)
- Shift Pitch Up and Down for a Good Laugh (00:05:00)
- Delay and Echo Effects (00:06:00)
- Spectral Frequency Editing and Pitch Display (00:04:00)
- Reversing Audio to Create Amazing Sounds (00:02:00)

Obtain Your Certificate
- Order Your Certificate of Achievement (00:00:00)

Get Your Insurance Now
- Get Your Insurance Now (00:00:00)

Feedback
- Feedback (00:00:00)

Airport Management Basics

Course Overview

Airport management covers a vast area and a wide range of tasks, so it takes a great deal of experience to become part of the airport management industry. Industry experts created the Airport Management Basics course to help you grasp the key skills and knowledge needed to pursue a career in this sector. Through this course, you will be introduced to the crucial departments of airport management. The course will teach you how to provide quality customer service in airports. This step-by-step learning program will instruct you on cargo management and airport components. You will also acquire the skills and expertise needed for security management. The modules also cover airline scheduling, aviation noise control, weather control and more. This Airport Management Basics course is loaded with valuable information and insights into airport management. Enroll in the course and prepare yourself for a promising career in the airport management sector.

Learning Outcomes

- Learn the fundamentals of airport management
- Get quality training on airport customer service
- Familiarize yourself with airline scheduling
- Build essential skills for passenger terminal management and cargo management
- Enrich your knowledge of aviation noise control
- Become competent in airport security management

Who is this course for?

The Airport Management Basics course is suitable for anyone interested in pursuing a career in airport management.

Entry Requirements

This course is available to learners of all academic backgrounds. Learners should be aged 16 or over to undertake the qualification. A good understanding of the English language, numeracy and ICT is required to attend this course.

Certification

After successfully completing the course, you will be able to obtain an Accredited Certificate of Achievement. You can also obtain a Course Completion Certificate when you finish the course, without sitting the test. Certificates cost £39 in hard copy or £24 in PDF format. The turnaround time is 24 hours for the PDF certificate and 3-9 working days for the hard copy.

Why choose us?

- Affordable, engaging and high-quality e-learning study materials
- Tutorial videos and materials from industry-leading experts
- Study on a user-friendly, advanced online learning platform
- Efficient exam systems for assessment with instant results
- UK and internationally recognized accredited qualification
- Access to course content on mobile, tablet or desktop from anywhere, at any time
- Career advancement opportunities
- 24/7 student support via email

Career Path

The Airport Management Basics course is a useful qualification to hold. It would benefit any related profession or industry, such as:

- Airport manager
- Airport security director
- Aviation manager
- Operations site manager

Course Curriculum

- Module 01: Introduction to Airport Management (00:16:00)
- Module 02: Airport Customer Service (00:12:00)
- Module 03: Passenger Terminal Management (00:16:00)
- Module 04: Airport Components (00:17:00)
- Module 05: Airport Peaks and Airline Scheduling (00:18:00)
- Module 06: Cargo Management (00:19:00)
- Module 07: Aviation Noise Control (00:11:00)
- Module 08: Weather Control (00:16:00)
- Module 09: Sustainable Airport Management (00:17:00)
- Module 10: Security Management (00:17:00)
- Module 11: Innovation and Growth (00:16:00)
- Certificate and Transcript: Order Your Certificates and Transcripts (00:00:00)

Building Surveying, Construction Management With Construction Site Management

By School Of Health Care

Would you like to gain valuable knowledge that will help you stand out from your rivals? If so, our building surveying course could be the perfect choice. The course covers construction technology, building pathology, regulations and inspection. It also includes maintenance, heritage conservation, BIM (Building Information Modelling) and health and safety. In addition, it describes professional ethics, project management, sustainability and communication. Students gain skills in defect recognition, risk evaluation, maintenance planning and client communication. What are you waiting for? Enrol in this building surveying course right away.

Main Course: Building Surveying

Free Courses Included:

- Course 01: Construction Management
- Course 02: Construction Site Management

Special Offers of this Building Surveying Course:

- A free PDF certificate as soon as you complete the course
- Lifetime access
- Instant access
- 24/7 support

If you want to learn more about the exciting topic of building surveys, sign up for our course. You will discover the principles, methods and best practices of building surveying from professionals in the field. You will also learn its rules and procedures and start on the path to a fulfilling career in building surveying.

Certificate of Completion

You will receive a free course completion certificate as soon as you complete the Building Surveying course.

Who is this course for?

Anyone interested in earning a diploma in this field can take this building surveying course.

Requirements

To enrol in this Building Surveying course, students must fulfil the following requirements:

- A good command of the English language
- The energy and self-motivation to complete the course
- Basic computer skills
- A minimum age of 15

Career path

This building surveying course provides an exceptional chance to grow professionally and acquire useful skills.

Construction Project Management with Site Management & Construction Management

By School Of Health Care

Site Management Course Online

Do you have a strong desire to learn site management? Don't rush; we're here to help you build your understanding of it. The Site Management course provides in-depth insights into how to plan, manage time and budget for a new project. It also teaches resource allocation for projects. As a learner of construction site management, you can gain practical knowledge of safety protocols and risk management strategies. The course also covers conflict resolution techniques. Throughout the course, you will learn technical and effective communication skills. So why wait? Enrol in our Site Management course to grasp the complexities of site management and its significance.

Why choose this Site Management course from the School of Health Care?

- Self-paced course, accessible from anywhere in the world
- High-quality study materials that are easy to understand
- Course developed by industry experts
- An MCQ quiz after each module to assess your learning
- Assessment results generated automatically and instantly
- 24/7 support via live chat, phone call or email
- Free PDF certificate after completing the course

Main Course: Construction Site Management Course

Free Courses Included with the Site Management Course:

- Course 01: Level 1 Health and Safety in a Construction Environment
- Course 02: Construction Project Management
- Course 03: Level 7 Construction Management

Course Curriculum

This Site Management course consists of 15 modules:

- Module 01: Introduction to Construction Management
- Module 02: Construction Site Management
- Module 03: Equipment Procurement Plan
- Module 04: Construction Project Management
- Module 05: Equipment Planning
- Module 06: Purchasing and Procurement Management
- Module 07: Material Management
- Module 08: Project Planning
- Module 09: Management of Construction Project Contract
- Module 10: Human Resource Management
- Module 11: Health and Safety in Construction Environment
- Module 12: Working at Height
- Module 13: Team Building and Management
- Module 14: First Aid at Construction Site
- Module 15: Managing Violence at the Workplace

Assessment Method

After each module of the Site Management course, you will take a quiz to assess your learning. You can move on to later modules once you score 60% on the quiz. You do not need to sit any other assessments.

Certification

After completing the Site Management course, you can instantly download your certificate for free. A hard copy of the certificate can also be posted to your door for £13.99.

Who is this course for?

The Site Management course is highly recommended for people who are interested in this industry or already work in it.

Requirements

To enrol in this Site Management course, students must fulfil the following requirements:

- A good command of the English language
- The energy and self-motivation to complete the course
- Basic computer skills
- A minimum age of 15

Career path

After finishing this Site Management course, you will have the knowledge and abilities to pursue in-demand construction site manager positions.

Mixing Audio for Animation in Audacity

By Study Plex

Recognised Accreditation

This course is accredited by continuing professional development (CPD). CPD UK is globally recognised by employers, professional organisations and academic institutions. A certificate from CPD Certification Service therefore adds value to your professional goals and achievements.

Benefits of CPD

- Improves your employment prospects
- Boosts your job satisfaction
- Promotes career advancement
- Enhances your CV
- Gives you a competitive edge in the job market
- Demonstrates your dedication
- Showcases your professional capabilities

What is IPHM?

IPHM is an accreditation board that provides training providers with international accreditation. Accreditation from the International Practitioners of Holistic Medicine (IPHM) is a guarantee of quality and skill.

Benefits of IPHM

- It will help you establish a positive reputation in your chosen field
- You can join a network and community of successful therapists who are dedicated to providing excellent care to their clients
- You can showcase this accreditation on your CV
- It is a globally recognised accreditation

What is the Quality Licence Scheme?

This course is endorsed by the Quality Licence Scheme for its high-quality, non-regulated provision and training programmes. The Quality Licence Scheme is a brand of the Skills and Education Group, a leading national awarding organisation. The group provides high-quality vocational qualifications across a wide range of industries.

Benefits of the Quality Licence Scheme

- The certificate is valuable
- It provides a competitive edge in your career
- It will make your CV stand out

Course Curriculum

Introduction to the Course
- Introduction (00:02:00)
- Downloading Audacity (00:01:00)
- Creating a New Project (00:02:00)

Getting Familiar With Audacity
- Playback and Transport (00:07:00)
- Zooming and Navigation (00:06:00)
- Managing Tracks (00:09:00)
- Showing Waveform and Spectrogram (00:06:00)
- Mono and Stereo Tracks (00:05:00)

Tools and Techniques for Recording and Mixing
- Editing Tracks (00:09:00)
- Using Labels to Identify Sections (00:06:00)
- Recording Audio with Your Smartphone and Good Acoustics (00:09:00)
- Recording Audio Inside Audacity (00:05:00)
- Cleaning and Improving the Recorded Audio (00:10:00)
- Compressing to Improve Audio Levels (00:16:00)
- Editing Audio with the Different Tools in Audacity (00:12:00)
- Mixing a Scene: Music and Sound Effects (00:18:00)
- Adding Dialogue to Finish off the Scene (00:19:00)

Obtain Your Certificate
- Order Your Certificate of Achievement (00:00:00)

Get Your Insurance Now
- Get Your Insurance Now (00:00:00)

Feedback
- Feedback (00:00:00)

VMware Kubernetes Fundamentals and Cluster Operations

By Nexus Human

Duration: 4 days (24 CPD hours)

Who this course is for: anyone who is preparing to build and run Kubernetes clusters.

Overview

By the end of the course, you should be able to meet the following objectives:

- Build, test, and publish Docker container images
- Become familiar with YAML files that define Kubernetes objects
- Understand Kubernetes core user-facing concepts, including pods, services, and deployments
- Use kubectl, the Kubernetes CLI, and become familiar with its commands and options
- Understand the architecture of Kubernetes (the control plane and its components, worker nodes, and kubelet)
- Learn how to troubleshoot issues with deployments on Kubernetes
- Apply resource requests, limits, and probes to deployments
- Manage dynamic application configuration using ConfigMaps and Secrets
- Deploy other workloads, including DaemonSets, Jobs, and CronJobs
- Learn about user-facing security using SecurityContext, RBAC, and NetworkPolicies

This four-day course is the first step in learning about containers, Kubernetes fundamentals and cluster operations. A series of lectures and lab exercises presents the fundamental concepts of containers and Kubernetes. You then put them into practice by containerizing a two-tier application and deploying it into Kubernetes.

Course outline

- Course Introduction: Introductions and objectives
- Containers: What and why containers; Building images; Running containers; Registry and image management
- Kubernetes Overview: Kubernetes project; Plugin interfaces; Building Kubernetes; kubectl CLI
- Beyond Kubernetes Basics: Kubernetes objects; YAML; Pods, replicas, and deployments; Services; Deployment management; Rolling updates; Controlling deployments; Pod and container configurations
- Kubernetes Networking: Networking within a pod; Pod-to-Pod networking; Services to Pods; ClusterIP, NodePort, and LoadBalancer; Ingress controllers; Service discovery via DNS
- Stateful Applications in Kubernetes: Stateless versus stateful; Volumes; Persistent volume claims; StorageClasses; StatefulSets
- Additional Kubernetes Considerations: Dynamic configuration; ConfigMaps; Secrets; Jobs, CronJobs
- Security: Network policy; Applying a NetworkPolicy; SecurityContext; runAsUser/Group; Service accounts; Role-based access control
- Logging and Monitoring: Logging for various objects; Sidecar logging; Node logging; Audit logging; Monitoring architecture; Monitoring solutions; Octant; VMware vRealize Operations Manager
- Cluster Operations: Onboarding new applications; Backups; Upgrading; Drain and cordon commands; Impact of an upgrade on running applications; Troubleshooting commands; VMware Tanzu portfolio overview

VMware Tanzu Kubernetes Grid: Install, Configure, Manage [V2.0]

By Nexus Human

Duration: 4 days (24 CPD hours)

Overview

By the end of the course, you should be able to meet the following objectives:
- Describe how Tanzu Kubernetes Grid fits in the VMware Tanzu portfolio
- Describe the Tanzu Kubernetes Grid architecture
- Deploy and manage Tanzu Kubernetes Grid management and supervisor clusters
- Deploy and manage Tanzu Kubernetes Grid workload clusters
- Deploy, configure, and manage Tanzu Kubernetes Grid packages
- Perform basic troubleshooting

During this four-day course, you focus on installing VMware Tanzu® Kubernetes Grid™ in a VMware vSphere® environment and on provisioning and managing Tanzu Kubernetes Grid clusters. The course covers how to install Tanzu Kubernetes Grid packages for image registry, authentication, logging, ingress, multipod network interfaces, service discovery, and monitoring. The concepts learned in this course are transferable to users who must install Tanzu Kubernetes Grid on other supported clouds.

Course Introduction
- Introductions and course logistics
- Course objectives

Introducing VMware Tanzu Kubernetes Grid
- Identify the VMware Tanzu products responsible for Kubernetes life cycle management and describe the main differences between them
- Explain the core concepts of Tanzu Kubernetes Grid, including bootstrap, Tanzu Kubernetes Grid management, supervisor, and workload clusters
- List the components of a Tanzu Kubernetes Grid instance

VMware Tanzu Kubernetes Grid CLI and API
- Illustrate how to use the Tanzu CLI
- Define the Carvel tool set
- Define Cluster API
- Identify the infrastructure providers
- List the Cluster API controllers
- Identify the Cluster API custom resource definitions

Authentication
- Explain how Kubernetes manages authentication with management clusters
- Explain how Kubernetes manages authentication with supervisor clusters
- Define Pinniped
- Define Dex
- Describe the Pinniped authentication workflow

Load Balancers
- Illustrate how load balancing works for the Kubernetes control plane
- Illustrate how load balancing works for application workloads
- Explain how Tanzu Kubernetes Grid integrates with VMware NSX Advanced Load Balancer
- List load balancing options available on public clouds

VMware Tanzu Kubernetes Grid on vSphere
- List the requirements for deploying a supervisor cluster
- List the steps to install a Tanzu Kubernetes Grid supervisor cluster
- Summarize the events of a supervisor cluster creation
- List the requirements for deploying a management cluster
- List the steps to install a Tanzu Kubernetes Grid management cluster
- Summarize the events of a management cluster creation
- Demonstrate how to use commands when working with management clusters

VMware Tanzu Kubernetes Grid on Public Clouds
- List the requirements for deploying a management cluster on AWS and Microsoft Azure
- List the configuration options to install a Tanzu Kubernetes Grid management cluster on AWS and Azure

Tanzu Kubernetes Workload Clusters
- List the steps to build a custom image
- Describe the available customizations
- Identify the options for deploying Tanzu Kubernetes Grid clusters
- Explain the difference between the v1alpha3 and v1beta1 APIs
- Explain how Tanzu Kubernetes Grid clusters are created
- Discuss which VMs compose a Tanzu Kubernetes Grid cluster
- List the pods that run on a Tanzu Kubernetes Grid cluster
- Describe the Tanzu Kubernetes Grid core add-ons that are installed on a cluster

Tanzu Kubernetes Grid Packages
- Define the Tanzu Kubernetes Grid packages
- Explain the difference between Auto-Managed and CLI-Managed packages
- Define package repositories

Configuring and Managing Tanzu Kubernetes Grid Operation and Analytics Packages
- Describe Cert-Manager
- Describe the Harbor Image Registry
- Describe Fluent Bit
- Identify the logs that Fluent Bit collects
- Explain basic Fluent Bit configuration
- Describe Prometheus and Grafana

Configuring and Managing Tanzu Kubernetes Grid Networking Packages
- Describe the Contour ingress controller
- Demonstrate how to install Contour on a Tanzu Kubernetes Grid cluster
- Describe ExternalDNS
- Demonstrate how to install Service Discovery with ExternalDNS
- Describe Multus CNI

Tanzu Kubernetes Grid Day 2 Operations
- List the load balancer configuration options in vSphere to load balance applications
- Demonstrate how to configure Ingress with NodePortLocal mode
- Explain how to install VMware Tanzu Application Platform
- Describe life cycle management in Tanzu Kubernetes Grid
- Explain how backup and restore are implemented in Tanzu Kubernetes Grid
- Describe Velero and Restic
- List the steps to back up a workload cluster using Velero and Restic

Troubleshooting Tanzu Kubernetes Grid
- Discuss the various Tanzu Kubernetes Grid logs
- Identify the location of Tanzu Kubernetes Grid logs
- Explain the purpose of crash diagnostics
- Demonstrate how to check the health of a Tanzu Kubernetes Grid cluster
- Explain package cleanup procedures
- Explain management recovery procedures

Additional course details:

Notes: Delivery by TDSynex, Exit Certified and New Horizons, a VMware Authorised Training Centre (VATC).

Nexus Human's VMware Tanzu Kubernetes Grid: Install, Configure, Manage [V2.0] training program is a workshop that presents an invigorating mix of sessions, lessons, and masterclasses meticulously crafted to propel your learning expedition forward. This immersive bootcamp-style experience boasts interactive lectures, hands-on labs, and collaborative hackathons, all strategically designed to fortify fundamental concepts. Guided by seasoned coaches, each session offers priceless insights and practical skills crucial for honing your expertise. Whether you're new to professional skills or a seasoned professional, this comprehensive course ensures you're equipped with the knowledge and prowess necessary for success. While we feel this is the best course for VMware Tanzu Kubernetes Grid: Install, Configure, Manage [V2.0] and one of our Top 10, we encourage you to read the course outline to make sure it is the right content for you.

Additionally, private sessions, closed classes or dedicated events are available both live online and at our training centres in Dublin and London, as well as at your offices anywhere in the UK, Ireland or across EMEA.

![VMware Tanzu Kubernetes Grid: Install, Configure, Manage [V2.0]](https://cademy-images-io.b-cdn.net/9dd9d42b-e7b9-4598-8d01-a30d0144ae51/4c81f130-71bf-4635-b7c6-375aff235529/original.png?width=3840)

OO220 - Operations Orchestration 10.x Flow Development

By Nexus Human

Duration: 4 days (24 CPD hours)

This course is recommended for:
- Operators
- Developers
- Administrators

Overview

After completing this course, you should be able to:
- Run and manage automated workflows using HP Operations Orchestration (OO) 10.x
- Perform a wide range of system administration, monitoring, and management tasks using OO Central
- Author, maintain, document, and package new automated workflows using the OO Studio application
- Test and debug flows locally and remotely
- Work with looping and iteration operations
- Apply parallel processing methods to your flows in HP Operations Orchestration (OO)
- Use responses, rules, and transitions to control flow runs
- Use XML operations and XML filters to process XML content in OO
- Work with JavaScript Object Notation (JSON) operations
- Use the file system content in the OO library
- Add email notifications to your flows
- Execute scriptlet methods in OO to manage flow data and flow execution
- Install, configure, and update OO

This four-day course introduces students to the essential concepts and usage, as well as to more advanced features, of the HP Operations Orchestration (OO) software. OO is part of HP Cloud Automation solutions.

Additional course details:

Nexus Human's OO220 - Operations Orchestration 10.x Flow Development training program is a workshop that presents an invigorating mix of sessions, lessons, and masterclasses meticulously crafted to propel your learning expedition forward. This immersive bootcamp-style experience boasts interactive lectures, hands-on labs, and collaborative hackathons, all strategically designed to fortify fundamental concepts. Guided by seasoned coaches, each session offers priceless insights and practical skills crucial for honing your expertise. Whether you're new to professional skills or a seasoned professional, this comprehensive course ensures you're equipped with the knowledge and prowess necessary for success. While we feel this is the best course for OO220 - Operations Orchestration 10.x Flow Development and one of our Top 10, we encourage you to read the course outline to make sure it is the right content for you.

Additionally, private sessions, closed classes or dedicated events are available both live online and at our training centres in Dublin and London, as well as at your offices anywhere in the UK, Ireland or across EMEA.

Search By Location

- Engineering Courses in London

- Engineering Courses in Birmingham

- Engineering Courses in Glasgow

- Engineering Courses in Liverpool

- Engineering Courses in Bristol

- Engineering Courses in Manchester

- Engineering Courses in Sheffield

- Engineering Courses in Leeds

- Engineering Courses in Edinburgh

- Engineering Courses in Leicester

- Engineering Courses in Coventry

- Engineering Courses in Bradford

- Engineering Courses in Cardiff

- Engineering Courses in Belfast

- Engineering Courses in Nottingham